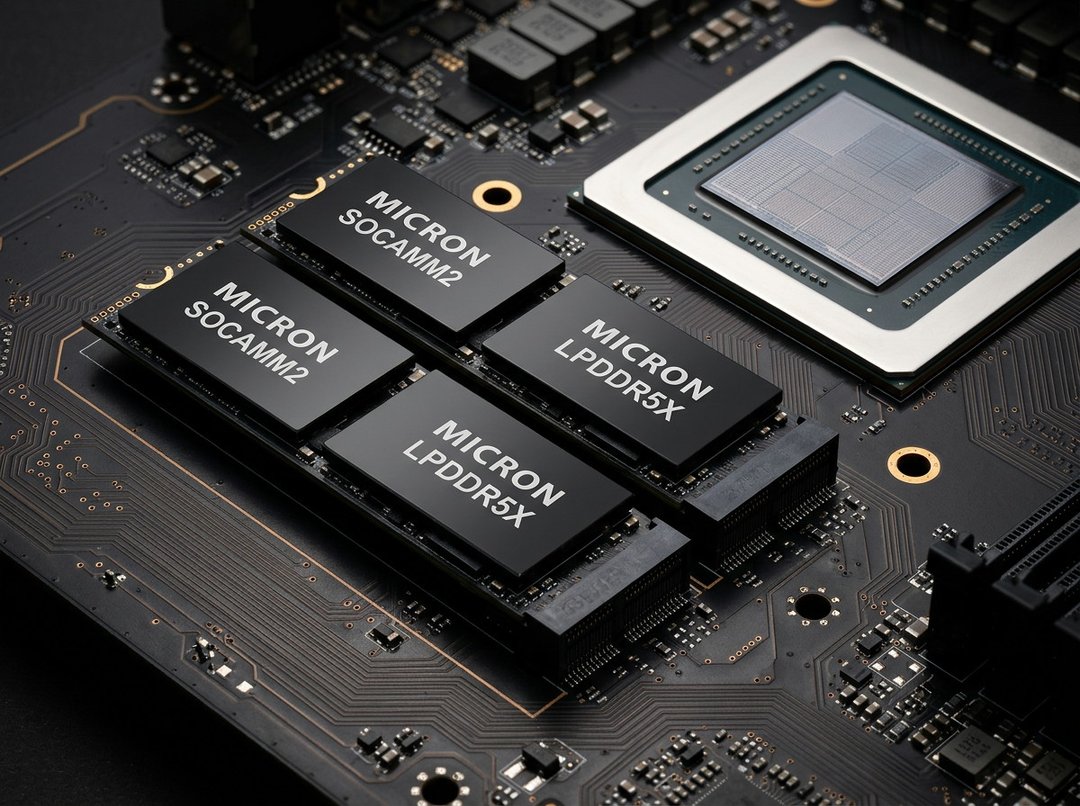

256GB SOCAMM2 Built with 64 32Gb LPDDR5X Dies

AI workloads are hungry. Not “a little extra RAM” hungry. I mean massive memory pool hungry. Large language models and modern inference pipelines just keep stretching the limits, and traditional server memory designs are starting to feel tight.

Micron’s 256GB SOCAMM2 memory module is a direct response to that pressure. It’s built from 64 monolithic 32Gb (gigabit) LPDDR5X dies, tightly packed into a dense LPDRAM module. That density matters, because when you’re running contemporary AI workloads, memory footprint isn’t a side concern; it’s often the bottleneck.
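A quick sanity check on that capacity figure, as a minimal sketch. It assumes each die stores 32 gigabits (Gb), the usual unit for DRAM die density; the function name is made up for illustration.

```python
# Sanity check: module capacity from die count and die density.
DIES_PER_MODULE = 64    # from the article
GIGABITS_PER_DIE = 32   # assumption: die density is quoted in gigabits (Gb)
BITS_PER_BYTE = 8


def module_capacity_gb(dies: int, gigabits_per_die: int) -> int:
    """Return module capacity in gigabytes (GB)."""
    return dies * gigabits_per_die // BITS_PER_BYTE


capacity_gb = module_capacity_gb(DIES_PER_MODULE, GIGABITS_PER_DIE)
print(f"{DIES_PER_MODULE} x {GIGABITS_PER_DIE}Gb = {capacity_gb}GB")
```

Sixty-four dies at 32Gb each works out to 256GB, which matches the module's rated capacity.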

This module isn’t just bigger for the sake of it. It’s designed for data center systems where capacity, bandwidth, and power efficiency all pull on each other. You don’t get to optimize one without thinking about the others. And Micron is clearly aiming at that balance.

Scaling AI Server Memory Capacity to 2TB per CPU Platform

Here’s where it gets kind of wild.

Install eight of these 256GB SOCAMM2 modules into an eight-channel server CPU, and you can reach 2TB of LPDRAM in a single processor configuration.

Two terabytes. Attached directly to the CPU.

That’s a significant jump over the previous 192GB module generation: roughly a one-third increase in capacity per module, or 1.5TB to 2TB across eight modules. And that extra headroom isn’t academic. It allows AI systems to handle larger context windows and more demanding inference tasks without choking on memory limits.
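The capacity math is simple enough to check by hand, but here it is as a small sketch. It assumes one module per memory channel, as in the eight-module example; the helper name is hypothetical.

```python
# Platform capacity and generation-over-generation uplift.
MODULE_GB = 256           # new module
PREVIOUS_MODULE_GB = 192  # previous generation
MODULES_PER_CPU = 8       # assumption: one module per channel on an eight-channel CPU


def platform_capacity_gb(module_gb: int, modules: int) -> int:
    """Total CPU-attached capacity in GB."""
    return module_gb * modules


total_gb = platform_capacity_gb(MODULE_GB, MODULES_PER_CPU)
previous_total_gb = platform_capacity_gb(PREVIOUS_MODULE_GB, MODULES_PER_CPU)
uplift = (MODULE_GB - PREVIOUS_MODULE_GB) / PREVIOUS_MODULE_GB

print(f"New platform:      {total_gb} GB ({total_gb / 1024:.1f} TB)")
print(f"Previous platform: {previous_total_gb} GB ({previous_total_gb / 1024:.1f} TB)")
print(f"Uplift per module: {uplift:.1%}")
```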

For hyperscalers building AI infrastructure at scale, that kind of jump changes design decisions. You’re not constantly working around memory ceilings. You can architect for growth instead of compromise.

Power Efficiency Compared to Traditional RDIMMs

Capacity alone doesn’t win in the data center. Power does. Cooling does. Rack density does.

Micron says this 256GB LPDRAM module consumes about one-third the power of comparable RDIMMs while using only one-third of the physical footprint. That’s not a small tweak. That’s a shift in how much infrastructure you need to support the same — or greater — performance.

Lower energy demand means:

- Reduced thermal load

- Lower cooling requirements

- Higher rack density

- Decreased infrastructure costs

In large deployments, even modest efficiency gains compound fast. So cutting memory power draw this dramatically isn’t just nice to have — it directly impacts operating costs.
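To get a feel for how a one-third power figure compounds across a fleet, here's a rough sketch. The 12 W RDIMM draw and the 10,000-module fleet are placeholder assumptions, not Micron numbers; only the one-third ratio comes from the claim above.

```python
# Fleet-level energy comparison under placeholder inputs.
HOURS_PER_YEAR = 24 * 365


def annual_energy_kwh(watts_per_module: float, module_count: int) -> float:
    """Energy used per year by a fleet of modules running continuously."""
    return watts_per_module * module_count * HOURS_PER_YEAR / 1000


RDIMM_WATTS = 12.0               # hypothetical baseline, for illustration only
MODULE_COUNT = 10_000            # hypothetical fleet size
LPDRAM_WATTS = RDIMM_WATTS / 3   # the "about one-third the power" claim

rdimm_kwh = annual_energy_kwh(RDIMM_WATTS, MODULE_COUNT)
lpdram_kwh = annual_energy_kwh(LPDRAM_WATTS, MODULE_COUNT)
saved_fraction = 1 - lpdram_kwh / rdimm_kwh

print(f"RDIMM fleet:  {rdimm_kwh:,.0f} kWh/year")
print(f"LPDRAM fleet: {lpdram_kwh:,.0f} kWh/year")
print(f"Memory energy saved: {saved_fraction:.0%}")
```

Whatever baseline you plug in, the saved fraction stays at about two-thirds of memory power, and that excludes any knock-on cooling savings.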

In standalone CPU workloads, the LPDRAM configuration reportedly delivers more than 3× better performance per watt compared with mainstream server memory modules. That performance-per-watt figure is what hyperscalers obsess over. It’s how you scale AI without watching energy bills explode.

SOCAMM2 Modular Architecture and Liquid-Cooled AI Servers

The SOCAMM2 architecture follows a modular design. That’s important for two reasons: maintenance and future expansion.

Data centers aren’t static. Memory requirements grow as model complexity increases and datasets expand. A modular format makes upgrades and servicing more manageable. You’re not rebuilding entire systems just to expand memory capacity.

The format also supports liquid-cooled server systems — increasingly common in high-density AI deployments. When you’re packing this much memory and compute into tight spaces, advanced cooling isn’t optional. It’s survival.

The smaller module size, combined with reduced power consumption, allows higher rack density in large-scale data centers. More compute. More memory. Same or smaller footprint. That’s how hyperscale infrastructure evolves.

Impact on AI Inference and Time-to-First-Token Performance

Memory capacity doesn’t just affect how much you can store. It affects how fast systems respond.

Micron reports more than a 2.3× improvement in time-to-first-token (TTFT) during long-context inference when the module is used for key-value (KV) cache offloading under unified memory architectures.

If you’ve ever waited for an AI system to start responding, you know that first token matters. It sets the tone for the entire interaction. A 2.3× speedup cuts that delay by more than half, to roughly 43% of the original wait, which can materially improve user experience and system responsiveness.
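Here's what a 2.3× speedup means in latency terms, as a minimal sketch. The 10-second baseline is a made-up example value, not a measured result.

```python
# Convert a speedup factor into remaining latency and percentage reduction.
def ttft_after_speedup(baseline_seconds: float, speedup: float) -> float:
    """Time-to-first-token after applying a speedup factor."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_seconds / speedup


SPEEDUP = 2.3            # "more than 2.3x", per Micron's reported figure
BASELINE_SECONDS = 10.0  # hypothetical baseline, for illustration only

new_ttft = ttft_after_speedup(BASELINE_SECONDS, SPEEDUP)
reduction = 1 - 1 / SPEEDUP

print(f"TTFT: {BASELINE_SECONDS:.1f}s -> {new_ttft:.2f}s")
print(f"Latency reduction: {reduction:.1%}")
```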

This becomes especially relevant as inference workloads grow larger and more context-heavy. The more context you retain, the more memory pressure you create. And this is exactly where high-capacity LPDRAM modules start to make sense.
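To make that memory pressure concrete, here's a back-of-the-envelope KV cache estimate using the standard sizing formula for transformer attention (keys plus values, per layer, per token). The model shape below is a hypothetical example, not something from Micron's announcement.

```python
# Rough KV cache sizing for a transformer during inference.
def kv_cache_bytes(
    layers: int,
    kv_heads: int,
    head_dim: int,
    tokens: int,
    bytes_per_value: int = 2,  # assumption: 16-bit (FP16/BF16) cache entries
    batch_size: int = 1,
) -> int:
    """Bytes needed to hold keys and values for every layer and token."""
    keys_and_values = 2
    return (
        keys_and_values * layers * kv_heads * head_dim
        * tokens * bytes_per_value * batch_size
    )


# Hypothetical model shape, chosen only for illustration.
LAYERS = 80
KV_HEADS = 8
HEAD_DIM = 128
CONTEXT_TOKENS = 128 * 1024

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens=1)
total = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens=CONTEXT_TOKENS)

print(f"KV cache per token: {per_token / 1024:.0f} KiB")
print(f"KV cache at {CONTEXT_TOKENS:,} tokens: {total / 2**30:.0f} GiB")
```

Under these assumptions a single 128K-token sequence needs about 40 GiB of cache, and that grows linearly with both context length and the number of concurrent sequences.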

LPDRAM Portfolio and Availability

The 256GB SOCAMM2 module sits at the top of Micron’s LPDRAM portfolio.

The broader lineup includes:

- Components ranging from 8GB to 64GB

- SOCAMM2 modules from 48GB up to 256GB

Customer samples of the 256GB SOCAMM2 module are already shipping, signaling that this isn’t just a roadmap announcement. It’s moving into real-world deployment.

For AI infrastructure builders, that availability matters, because scaling plans don’t wait.