The Squeeze Is Real, and It's Getting Worse

If you're running an AI startup right now, you've probably felt it: the creeping dread when you try to spin up more compute and hit a wall of longer wait times, steeper prices, and minimum commitments that feel designed for companies ten times your size. It's not in your head. Microsoft and Amazon are actively funneling Nvidia GPU stockpiles toward their own internal teams and their biggest enterprise clients first. Everyone else is waiting in line.

And that line isn't getting shorter anytime soon. Microsoft employees reportedly expect GPU wait times for cloud customers to stretch through at least the end of 2026. That's not a blip; it's a structural problem.

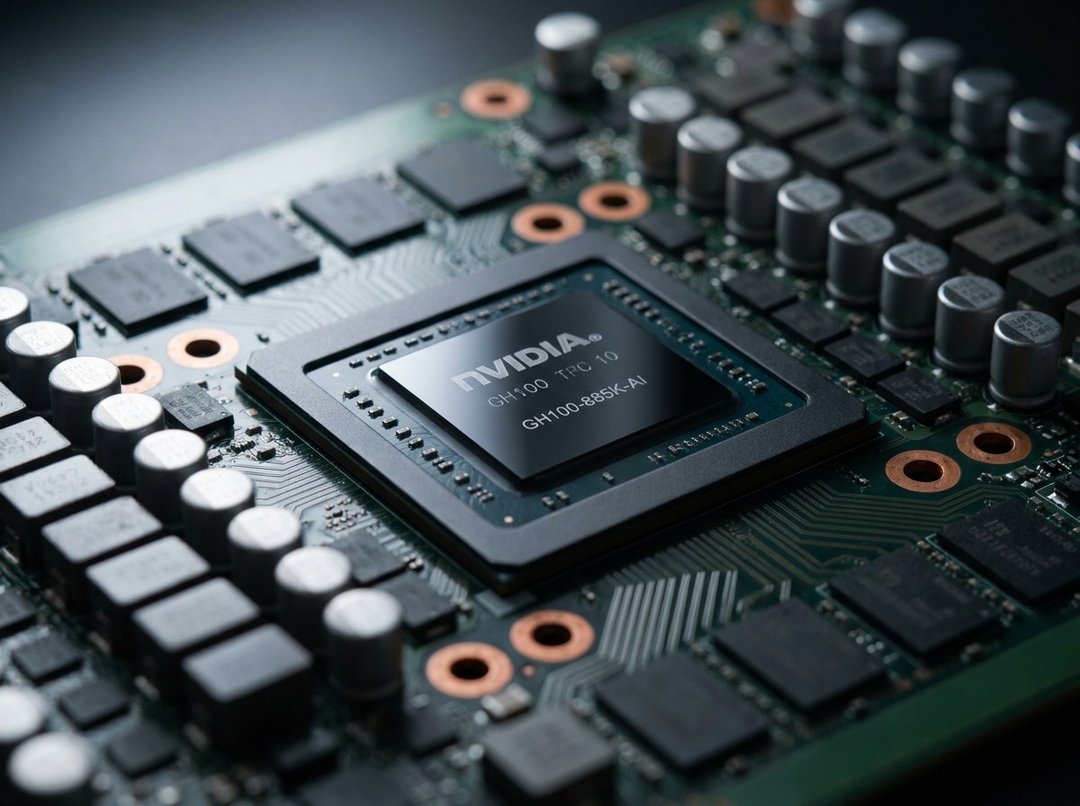

GPU Rental Prices Are Climbing Fast

Here's a concrete number that should stop you in your tracks: according to SemiAnalysis, one-year rental contracts for Nvidia's H100 GPU jumped nearly 40% in just six months — from $1.70 per hour in October 2025 to $2.35 per hour by March 2026.

Will Falcon, CEO of neocloud provider Lightning AI, reports the same trend: prices on his platform rose more than 25% over the same period, from around $1.60 per hour to over $2 per hour.

That's not gradual inflation. That's a market under serious pressure.
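
To sanity-check those percentages yourself, here's a minimal Python sketch. The prices are the ones quoted above; the `pct_change` helper is just an illustrative name. Because Lightning AI's ending price is only described as "over $2," the second figure is treated as a lower bound.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change going from an old price to a new price."""
    return (new - old) / old * 100.0


# SemiAnalysis figures for one-year H100 contracts (USD per GPU-hour).
h100_change = pct_change(1.70, 2.35)

# Lightning AI: "around $1.60" to "over $2", so $2.00 gives a floor.
lightning_floor = pct_change(1.60, 2.00)

print(f"H100 one-year contracts: {h100_change:.1f}% increase")
print(f"Lightning AI (lower bound): {lightning_floor:.1f}% increase")
```

The H100 jump works out to roughly 38%, which is where the "nearly 40%" comes from.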

Microsoft's Blackwell Chip Requirements Are Eye-Watering

Want access to Nvidia's latest Blackwell chips through Microsoft? You'll need to commit to leasing at least 1,000 chips for a minimum of one year. That puts contract values in the tens of millions of dollars. For smaller startups, that's not a hurdle; it's a wall.

And even if you're after older-generation Nvidia chips, you're looking at waiting periods measured in weeks to months, not days.
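
To see how a 1,000-chip, one-year minimum lands in the tens of millions, here's a rough back-of-envelope sketch. Big caveat: no Blackwell hourly rate is quoted here, so the $2.35 H100 contract rate serves as a conservative floor and the higher rates are purely hypothetical placeholders. It also assumes every chip is billed for every hour of the term.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def min_commitment_usd(chips: int, hourly_rate: float, years: float = 1.0) -> float:
    """Contract value if every chip is billed for every hour of the term."""
    return chips * hourly_rate * HOURS_PER_YEAR * years


# 2.35 is the quoted H100 contract rate, used only as a floor.
# 4.00 and 6.00 are hypothetical rates, not quoted Blackwell prices.
for rate in (2.35, 4.00, 6.00):
    total = min_commitment_usd(chips=1_000, hourly_rate=rate)
    print(f"${rate:.2f}/hr -> ${total / 1e6:.1f}M for one year")
```

Even at the older H100 rate, the minimum comes to about $20.6 million for the year.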

The "Use It or Lose It" Problem

There's another layer here that's genuinely harsh. Microsoft tracks GPU utilization and will revoke access, even from startups receiving free credits, if servers sit idle for even a few hours. So you're paying more, waiting longer, and then getting penalized if you don't use every single cycle perfectly. That's a brutal operating environment for any team still figuring out its training workflows.

Why This Feels So Familiar

If this reminds you of early 2023, that's because it's largely a repeat. Back then, cloud providers redirected resources toward internal projects and major clients like OpenAI, leaving everyone else to scramble. But people close to the situation say the current crunch is more acute, driven by explosive demand for AI coding tools and surging compute needs from large developers.

There's also a timing problem specific to this moment: many AI startups are watching two- to three-year cloud contracts expire. That expiration gives providers real leverage. Startups either accept higher prices or lose their capacity allocation to a bigger spender. Microsoft has reportedly formalized this dynamic by segmenting its cloud customers into tiers, with roughly 1,000 top-spending clients getting priority GPU access. If you're not in that group, you feel it.

The Idle GPU Paradox

Here's where it gets almost absurd. A Cast AI report found that 95% of provisioned GPU capacity across thousands of organizations just... sits there. Unused. Companies are overbuying chips out of fear of losing access, and the result is a market where everyone's hoarding and almost no one is actually using what they have.

So startups are fighting for scraps while a huge chunk of existing compute collects dust. It's a FOMO-driven arms race that's making the underlying problem worse for everyone except the cloud providers collecting the rent.
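
Idle capacity isn't free, either. Here's a small sketch of what low utilization does to the effective price of the hours you actually use. It assumes (and this is an interpretation, not something the Cast AI report is quoted as saying) that "95% unused" translates to paying for 100% of the hours while using 5% of them, priced at the $2.35 H100 contract rate.

```python
def cost_per_useful_hour(hourly_rate: float, utilization: float) -> float:
    """Effective price of one utilized GPU-hour when only a fraction is used."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_rate / utilization


# 2.35 USD/hr is the quoted H100 contract rate; utilization levels are
# illustrative, with 0.05 standing in for "95% sits unused".
for utilization in (1.00, 0.50, 0.05):
    cost = cost_per_useful_hour(2.35, utilization)
    print(f"{utilization:.0%} utilized -> ${cost:.2f} per useful GPU-hour")
```

Under those assumptions, a GPU rented at $2.35 per hour but used 5% of the time effectively costs about $47 for each hour of real work.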

What VCs and Founders Are Actually Doing About It

Hemant Taneja, managing partner at General Catalyst, has been directly surveying founders in the firm's portfolio about their compute access. His verdict: GPU availability is "one of the biggest bottlenecks this year." The firm is exploring shared computing pools and direct negotiations on behalf of startups — echoing the playbook some VCs ran during the 2023 shortage.

General Catalyst isn't alone. Sequoia Capital, Founders Fund, and Andreessen Horowitz all have portfolio companies caught in this crunch, which means there's real VC-level pressure building to find workarounds.

Going Direct: Buying Instead of Renting

Some founders are seriously considering skipping cloud providers altogether and just buying GPUs outright. It's a big upfront cost, and it comes with its own headaches. But when hyperscalers are charging roughly two to four times the rental rates offered by specialized providers, the math starts to shift. Owning starts to look less crazy than renting at those markups.
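
Here's one way to sketch that math. Everything except the 2x to 4x multiple and the $2.35 base rate is a made-up placeholder: the $30,000 purchase price and the $0.60 per hour running cost (power, hosting, staff) are hypothetical, and the model assumes an owned GPU is busy around the clock, which the idle-capacity numbers suggest is optimistic.

```python
import math

HOURS_PER_YEAR = 24 * 365


def breakeven_hours(purchase_price: float, rental_rate: float,
                    owner_cost_per_hour: float) -> float:
    """GPU-hours of use after which buying beats renting at the given rate."""
    saving_per_hour = rental_rate - owner_cost_per_hour
    if saving_per_hour <= 0:
        return math.inf  # renting is never more expensive, so no break-even
    return purchase_price / saving_per_hour


SPECIALIZED_RATE = 2.35   # quoted H100 contract rate, USD per hour
PURCHASE_PRICE = 30_000   # hypothetical per-GPU price, USD
OWNER_COST = 0.60         # hypothetical running cost, USD per hour

for multiple in (1, 2, 4):  # 2x and 4x reflect the hyperscaler markup range
    hours = breakeven_hours(PURCHASE_PRICE, SPECIALIZED_RATE * multiple, OWNER_COST)
    print(f"{multiple}x rate: break-even after {hours / HOURS_PER_YEAR:.2f} years")
```

With these placeholder numbers, break-even against a specialized provider takes close to two years, while at a 4x hyperscaler markup it arrives in under six months. Swap in real quotes before drawing conclusions.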