AWS and Cerebras Collaboration to Accelerate AI Inference

Amazon Web Services (AWS) and Cerebras Systems have announced a strategic collaboration to deploy Cerebras CS-3 systems within AWS data centers. The partnership integrates Cerebras’ wafer-scale AI processors with Amazon’s custom Trainium chips to deliver ultra-fast AI inference in the cloud. The combined service will be available through Amazon Bedrock and is expected to launch in the coming months.

This initiative is designed to address one of the most pressing challenges in artificial intelligence: inference speed. By merging specialized hardware with AWS’s cloud infrastructure, the companies aim to provide the fastest AI inference available, particularly for demanding, real-time applications.

Inference Disaggregation: A New Architecture for AI Performance

Splitting AI Workloads for Maximum Efficiency

At the core of the partnership is a technique known as inference disaggregation. This architecture divides AI inference into two distinct phases, assigning each phase to the processor best optimized for the task.

- Prefill Phase: AWS Trainium handles the computationally intensive task of processing the user’s input prompt.

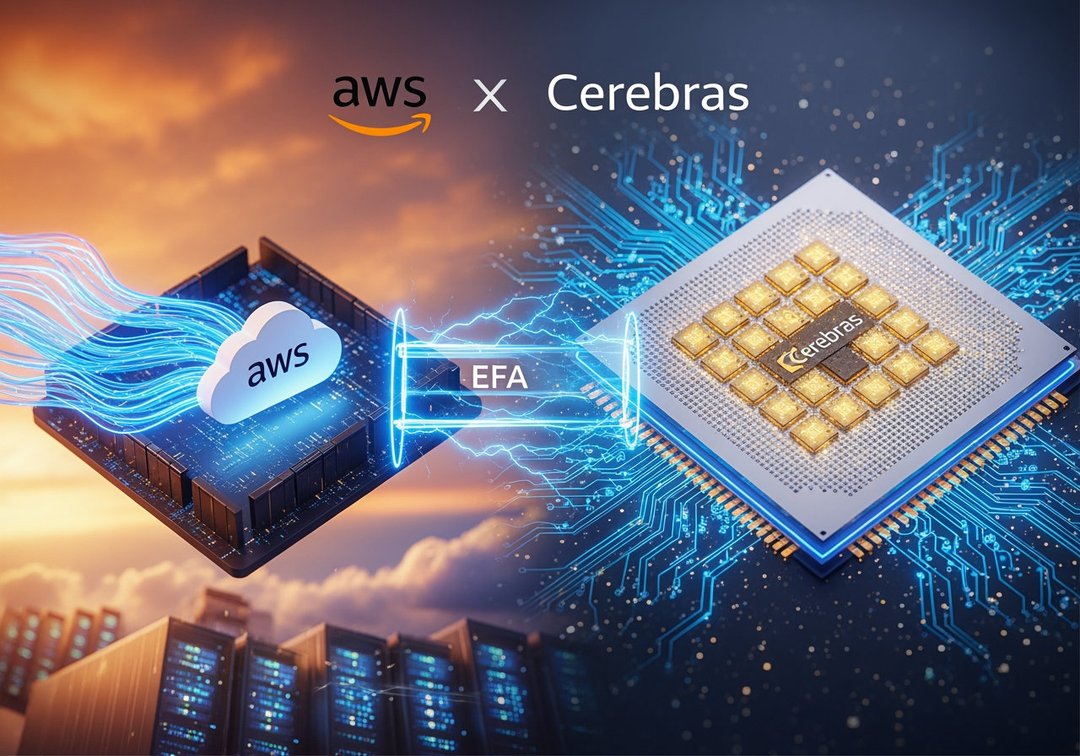

- Decode Phase: The processed data is transmitted via Amazon’s high-speed Elastic Fabric Adapter (EFA) networking to the Cerebras CS-3 system, which generates output tokens at extremely high speeds.

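Prefill processes the entire prompt at once and is generally compute-bound, while decode produces tokens one at a time and is generally limited by memory bandwidth. Allocating each phase to hardware suited to it is intended to raise efficiency and throughput across the inference pipeline.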
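The two-phase split can be illustrated with a toy sketch. It is not AWS's or Cerebras' implementation: the article does not say what the "processed data" handed between phases consists of, so the sketch assumes a key-value cache, as is common in disaggregated serving designs. All names (`KVCache`, `prefill`, `transfer`, `decode`) and the arithmetic standing in for a model are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Stand-in for the per-token state produced during prefill (assumed)."""
    entries: list = field(default_factory=list)


def prefill(prompt_tokens):
    """Process the whole prompt in a single pass (the compute-heavy phase)."""
    # A real model would compute attention keys/values here; this toy
    # version stores one integer per prompt token.
    return KVCache(entries=[t * 2 + 1 for t in prompt_tokens])


def transfer(cache):
    """Stand-in for shipping the cache between machines over the network."""
    return KVCache(entries=list(cache.entries))  # copy, as if serialized


def decode(cache, max_new_tokens, vocab_size=100):
    """Generate tokens one at a time, extending the cache at each step."""
    output = []
    for _ in range(max_new_tokens):
        next_token = sum(cache.entries) % vocab_size  # toy "model"
        output.append(next_token)
        cache.entries.append(next_token * 2 + 1)
    return output


def disaggregated_generate(prompt_tokens, max_new_tokens):
    cache = prefill(prompt_tokens)               # would run on the prefill device
    remote_cache = transfer(cache)               # would cross the interconnect
    return decode(remote_cache, max_new_tokens)  # would run on the decode device


tokens = disaggregated_generate([1, 2, 3], max_new_tokens=3)
```

The point of the structure is that `prefill` runs once per request over the full prompt, while `decode` runs once per output token, so the two functions have different performance profiles and can be placed on different hardware.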

Elastic Fabric Adapter Enables High-Speed Data Transfer

Amazon’s Elastic Fabric Adapter connects the Trainium and CS-3 systems. Its low-latency, high-bandwidth networking carries the prefill output to the decode stage, so that the handoff between the two systems does not become a bottleneck.

Thousands of Times Faster Than GPU-Based Alternatives

Cerebras states that the CS-3 system can generate output tokens at speeds thousands of times faster than traditional GPU-based alternatives during the decode phase. According to the company, this architecture enables a fivefold increase in high-speed token capacity within the same hardware footprint, delivering significantly greater throughput without expanding infrastructure.

Cerebras CS-3 and Wafer-Scale Engine Technology

Wafer-Scale Engine: 900,000 AI Cores on a Single Chip

Cerebras’ competitive advantage stems from its Wafer-Scale Engine processors. These dinner-plate-sized chips contain:

- 900,000 AI cores

- 4 trillion transistors

This architecture allows Cerebras systems to specialize in high-speed inference workloads, differentiating them from traditional GPU clusters.

Proven Enterprise and Hyperscale Deployments

Cerebras already provides inference capabilities for major AI players including OpenAI, Cognition, and Meta. In January, the company signed an agreement exceeding $10 billion to supply 750 megawatts of computing capacity to OpenAI over three years.

The AWS partnership extends Cerebras’ reach by embedding its hardware directly into the world’s largest cloud ecosystem, offering global scalability and enterprise accessibility.

Strategic Positioning Ahead of IPO Plans

Cerebras closed a $1 billion Series H funding round at a valuation exceeding $22 billion. Reports indicate the company is preparing for an IPO in 2026. By integrating with AWS, Cerebras gains access to AWS’s extensive customer base, accelerating adoption and strengthening its market position ahead of a potential public listing.

Amazon Bedrock Integration and Model Support

Access to Open-Source and Amazon Nova Models

The ultra-fast inference service will be delivered through Amazon Bedrock. Later this year, AWS plans to offer leading open-source large language models and Amazon’s Nova models running directly on Cerebras hardware.

This integration allows customers to leverage high-speed inference within existing AWS environments, reducing migration complexity while enhancing performance.
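For readers already using Bedrock, a request might look like the sketch below, which uses the existing Bedrock Runtime Converse API through boto3. How the Cerebras-backed service will actually be exposed has not been described in the announcement, so treating it as an ordinary model ID is an assumption, and `example.placeholder-model-v1` is a made-up identifier rather than a real model.

```python
def build_converse_request(model_id, prompt, max_tokens=512):
    """Build keyword arguments for the Bedrock Runtime Converse API."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def ask(model_id, prompt, region="us-east-1"):
    """Send one prompt and return the text of the first response block."""
    import boto3  # imported lazily so the builder above works without the SDK

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(model_id, prompt))
    return response["output"]["message"]["content"][0]["text"]


# Hypothetical model ID, for illustration only.
request = build_converse_request("example.placeholder-model-v1", "Hello")
```

Calling `ask` requires AWS credentials and access to a real model; only the request builder runs offline.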

Flexible Deployment Options for AI Workloads

AWS will support both the new disaggregated configuration and traditional inference setups. Customers can route workloads to the configuration that best fits their operational requirements, whether prioritizing speed, cost efficiency, or architectural familiarity.

Industry Shift Toward High-Speed AI Inference

From Model Training to Inference Optimization

The collaboration reflects a broader shift in the AI industry. As large language models mature, the competitive focus is moving from training capabilities to inference performance, the stage at which AI systems generate outputs in real-world applications.

Inference is increasingly viewed as the phase where AI delivers tangible business value, particularly in interactive and production-grade deployments.

Agentic Coding Tools Drive Token Demand

Emerging AI applications are intensifying the need for faster token generation. Agentic coding tools, for example, generate roughly 15 times more tokens per query than conversational chat systems. These high-output workloads require significantly greater inference throughput to maintain real-time responsiveness.
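A short calculation shows why a 15-fold increase in output tokens matters. The multiplier comes from the article; the chat response length (500 tokens) and the decode rate (50 tokens per second) are illustrative assumptions, not measured figures.

```python
AGENTIC_TOKEN_MULTIPLIER = 15  # "roughly 15 times", per the article


def generation_seconds(output_tokens, tokens_per_second):
    """Time to stream a response at a fixed decode rate."""
    return output_tokens / tokens_per_second


def required_rate(output_tokens, target_seconds):
    """Decode rate needed to finish a response within a time budget."""
    return output_tokens / target_seconds


chat_tokens = 500    # illustrative assumption
decode_rate = 50.0   # tokens per second, illustrative assumption
agentic_tokens = chat_tokens * AGENTIC_TOKEN_MULTIPLIER

chat_time = generation_seconds(chat_tokens, decode_rate)
agentic_time = generation_seconds(agentic_tokens, decode_rate)
rate_needed = required_rate(agentic_tokens, chat_time)

print(f"chat: {chat_time:.0f} s, agentic: {agentic_time:.0f} s")
print(f"rate needed to match chat latency: {rate_needed:.0f} tokens/s")
```

Under these assumptions, a response that takes 10 seconds in chat takes 150 seconds for the agentic workload at the same decode rate, and matching the chat latency would require 750 tokens per second.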

By combining Trainium’s prefill processing with Cerebras’ ultra-fast decode performance, AWS and Cerebras aim to eliminate inference bottlenecks for these demanding use cases.

Enabling Real-Time AI Applications at Scale

Real-time coding assistance, interactive applications, and other latency-sensitive workloads depend on rapid token generation. According to AWS leadership, splitting the inference workload allows each system to operate where it performs best, maximizing speed while maintaining cloud-scale flexibility.

The result is an architecture engineered to meet the growing global demand for faster AI inference within existing AWS environments.