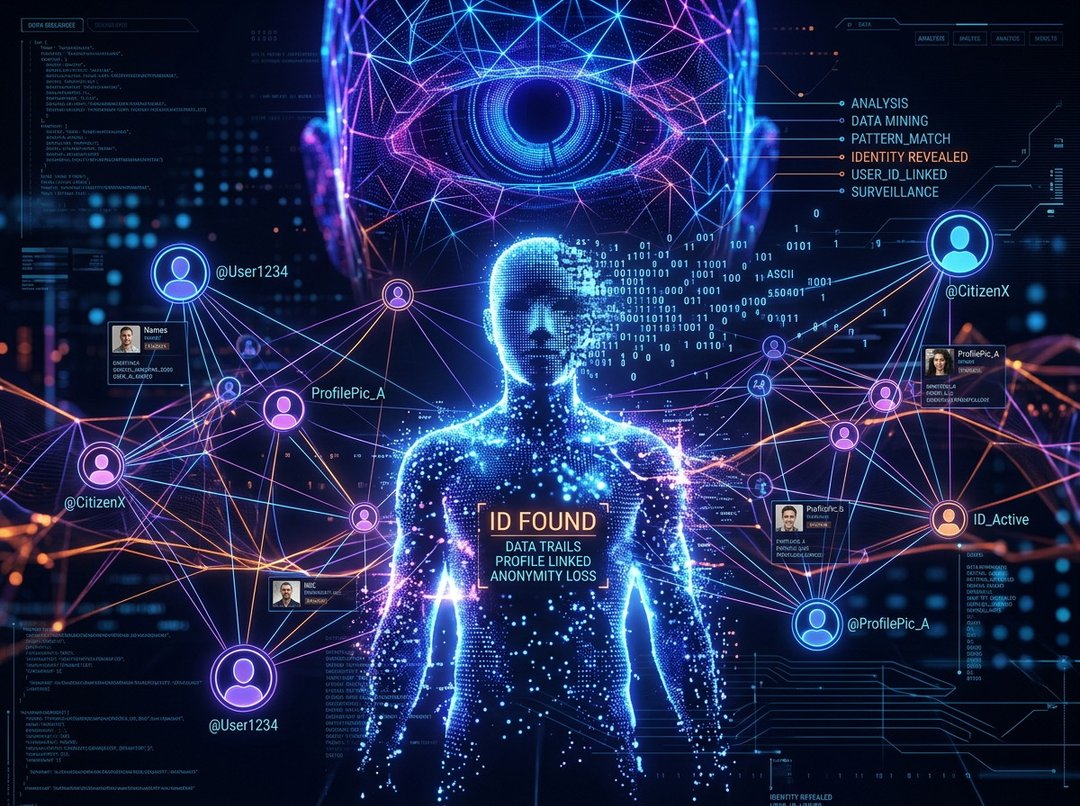

You know that quiet comfort of being a little anonymous online?

Like… you can post, comment, explore ideas — and there’s this thin veil between your real name and your username. It’s not perfect privacy. But it feels like enough.

And that’s exactly what new research from scientists at Anthropic and ETH Zurich is quietly shaking.

They’re arguing that this sense of “practical obscurity” — the idea that your identity is technically public somewhere but too scattered to piece together — may not hold up much longer. Because AI doesn’t get tired. It doesn’t miss patterns. And it’s getting very good at connecting dots.

How AI Can Deanonymize Online Accounts at Scale

The research, titled “Large-scale online deanonymization with LLMs,” explores how modern AI systems can automate something that used to require serious manual effort: deanonymization.

That’s the process of linking an anonymous or pseudonymous account back to a real-world identity.

Traditionally, this kind of investigation meant humans combing through posts, analyzing writing style, chasing digital breadcrumbs across platforms. Slow. Expensive. Skilled work.

Now? AI agents can do much of that automatically.

They can scan massive volumes of public content. Compare writing patterns. Cross-reference clues scattered across platforms. And do it in minutes instead of weeks.

Here’s what really stands out: researchers estimate that identifying an online account through their experimental pipeline could cost between $1 and $4 per profile.

Let that sink in.

If deanonymization becomes that cheap, large-scale identification isn’t just possible. It’s practical.

And that’s the shift.
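To put the $1 to $4 figure in perspective, here's a back-of-envelope sketch in Python. Only the per-profile bounds come from the study as reported above. The profile counts are hypothetical inputs, and the calculation says nothing about how often an attempt actually succeeds.

```python
# Back-of-envelope scaling of the per-profile cost range quoted in the article.
# The $1 and $4 bounds come from the study as reported; the profile counts
# used below are hypothetical inputs chosen only for illustration.

LOW_COST_PER_PROFILE = 1.0   # USD, lower estimate
HIGH_COST_PER_PROFILE = 4.0  # USD, upper estimate


def campaign_cost(num_profiles: int) -> tuple[float, float]:
    """Return the (low, high) total cost in USD for num_profiles identifications."""
    if num_profiles < 0:
        raise ValueError("num_profiles must be non-negative")
    return (num_profiles * LOW_COST_PER_PROFILE,
            num_profiles * HIGH_COST_PER_PROFILE)


if __name__ == "__main__":
    for n in (100, 10_000, 1_000_000):
        low, high = campaign_cost(n)
        print(f"{n:>9,} profiles: ${low:,.0f} to ${high:,.0f}")
```

At 10,000 profiles, the range works out to $10,000 to $40,000.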

The Collapse of “Practical Obscurity”

For years, anonymity online hasn’t depended on perfect secrecy. It’s depended on friction.

Your posts might be public. Your old usernames might be searchable. But pulling everything together into one clear identity? That took time and effort.

That barrier — that inconvenience — protected people.

The study suggests AI is lowering that barrier dramatically.

When automated systems can quickly connect digital clues across platforms, the cost and effort of exposing anonymous accounts drop. And once something becomes cheap and scalable, it no longer has to be reserved for a few high-value targets. It can be pointed at almost anyone.

That doesn’t automatically mean misuse. But it changes the equation.

It means the default protection we relied on — “no one will bother digging that deep” — may not apply in an AI-driven environment.

AI Agents and Automated Deanonymization

What makes this research especially significant is the role of AI agents.

We’re not talking about a single model answering a prompt. We’re talking about systems that can:

- Search for related accounts

- Analyze writing styles

- Compare linguistic patterns

- Follow connections across multiple online spaces

And do it autonomously.

In other words, AI isn’t just assisting investigators. It’s potentially running the entire investigative pipeline.

That automation is what makes large-scale deanonymization realistic.

When you combine pattern recognition, language modeling, and cross-platform analysis, you get something that can spot connections humans would miss, especially across thousands or millions of accounts.
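The “analyze writing styles” and “compare linguistic patterns” steps have a long pre-LLM history in stylometry. As a rough illustration only, here's a classic technique that is far simpler than the LLM agents the study describes: build character-trigram frequency profiles and rank candidate authors by cosine similarity. This is not the researchers' pipeline, and the function names and sample texts are hypothetical.

```python
import math
from collections import Counter


def char_ngrams(text: str, n: int = 3) -> Counter:
    """Count overlapping character n-grams in lowercased, whitespace-normalized text."""
    normalized = " ".join(text.lower().split())
    return Counter(normalized[i:i + n] for i in range(len(normalized) - n + 1))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity of two sparse frequency vectors (0.0 if either is empty)."""
    # Looking up a missing key in a Counter returns 0 without inserting it.
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_candidates(anonymous_text: str, candidates: dict[str, str],
                    n: int = 3) -> list[tuple[str, float]]:
    """Rank hypothetical candidate authors by stylistic similarity, highest first."""
    target = char_ngrams(anonymous_text, n)
    scores = [(name, cosine_similarity(target, char_ngrams(sample, n)))
              for name, sample in candidates.items()]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    anonymous = "Honestly, I reckon the new update is grand, though the menus are a faff."
    candidates = {
        "author_a": "Honestly, I reckon it's grand, the old menus were a right faff anyway.",
        "author_b": "The quarterly results exceeded expectations across all regions.",
    }
    for name, score in rank_candidates(anonymous, candidates):
        print(f"{name}: {score:.2f}")
```

On short posts like these the signal is weak and easy to fool. The point is only that style comparison can be automated at all; the agents described in the study combine it with searching and cross-referencing.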

Privacy Risks in the Age of Large Language Models

As AI systems become better at analyzing massive volumes of online content, the tension becomes clear.

On one hand, AI-driven discovery can be powerful. It can uncover fraud. Expose coordinated manipulation. Detect harmful behavior.

On the other hand, it can erode privacy protections that weren’t built to withstand this level of automation.

The study highlights this growing challenge: balancing the expanding capabilities of AI with the need to protect personal privacy in the digital age.

Because once tools exist that can mass-expose anonymous accounts, the question isn’t just whether they can be used responsibly.

It’s who controls them. And under what safeguards.

Potential Solutions to AI-Driven Deanonymization

The researchers point toward possible responses rather than simple alarm.

Some of the proposed directions include:

Improved Privacy Tools

Stronger privacy technologies could help users limit the digital trails that AI systems analyze.

If less identifiable data is publicly available, there’s less for automated systems to connect.

Stronger Platform Safeguards

Platforms themselves may need to rethink how they handle user data visibility.

That could mean better anonymization practices or structural protections that make cross-platform linkage harder.
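One structural protection in this spirit, offered as an illustration rather than something the study proposes, is giving each user a different identifier in each context, so IDs exposed to different sites or partners can't simply be matched against each other. OpenID Connect's “pairwise” subject identifiers follow a similar idea. Here's a minimal sketch using an HMAC, assuming a secret key that never leaves the platform; `pairwise_pseudonym` and the sample values are hypothetical.

```python
import hashlib
import hmac


def pairwise_pseudonym(secret_key: bytes, user_id: str, context: str) -> str:
    """Derive a stable, per-context identifier for user_id (hypothetical helper).

    The same user gets the same ID within one context but unrelated-looking IDs
    across contexts; without secret_key, the IDs cannot be linked by comparison.
    """
    # A NUL separator keeps ("ab", "c") and ("a", "bc") from producing the same
    # message, assuming neither field itself contains a NUL character.
    message = f"{context}\x00{user_id}".encode("utf-8")
    return hmac.new(secret_key, message, hashlib.sha256).hexdigest()


if __name__ == "__main__":
    key = b"example-secret-key-kept-server-side"  # hypothetical; use a random key in practice
    forum_id = pairwise_pseudonym(key, "user-42", "forum")
    market_id = pairwise_pseudonym(key, "user-42", "marketplace")
    print(forum_id == market_id)  # False
```

This only blocks linkage through identifiers. It does nothing about writing style or scattered personal details, which is the kind of linkage described above.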

AI Systems Designed to Protect Privacy

Ironically, AI may also be part of the solution.

Systems could be designed to anonymize sensitive data before it becomes publicly accessible. Instead of amplifying exposure, AI could reduce identifiability.

That’s a critical pivot — using AI defensively, not just analytically.
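As a very rough stand-in for the kind of pre-publication scrubbing described above, here's a rule-based sketch that masks email addresses and phone-number-like strings before text is posted. It is not an AI system, and the regular expressions are simplified assumptions: they will miss many formats and will over-redact some dates and other long digit runs.

```python
import re

# Deliberately simple patterns; real PII detection needs far more coverage.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# An optional "+", then a digit, 7+ digits/spaces/punctuation, ending on a digit.
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-number-like runs with placeholders."""
    text = EMAIL_PATTERN.sub("[email removed]", text)
    text = PHONE_PATTERN.sub("[phone removed]", text)
    return text


if __name__ == "__main__":
    post = "Reach me at jane.doe@example.com or +1 555-123-4567."
    print(redact(post))  # Reach me at [email removed] or [phone removed].
```

Direct identifiers are the easy part. Writing style and scattered personal details are exactly what a filter like this cannot see, which is where a smarter, AI-based system would have to take over.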

The Broader Implication for Online Identity

What this research really forces us to confront is simple: anonymity online was never absolute.

It relied on fragmentation. On noise. On the fact that connecting everything was too much work.

AI changes that.

When machines can synthesize enormous amounts of scattered information quickly and cheaply, identity becomes easier to reconstruct.

And that raises bigger questions about how we define privacy in an era where pattern recognition is automated at scale.

It’s not just about hiding your name. It’s about whether digital traces can be meaningfully separated anymore.