The surgeon's hand trembled slightly as she guided the robotic arm through a delicate procedure—except she wasn't in the operating room. She was 200 miles away, controlling the surgery remotely through a console. One second of delay could mean the difference between success and catastrophe. This isn't science fiction; it's happening today, powered by edge data centers that process commands in milliseconds rather than seconds.

Welcome to the world where every millisecond matters, and traditional cloud computing just isn't fast enough anymore.

The Speed Problem Nobody Talks About

We've grown accustomed to instant gratification online—streaming videos, loading web pages, sending messages. But there's a hidden bottleneck in our digital infrastructure that becomes glaringly obvious when applications demand split-second responses.

Traditional cloud data centers, often located hundreds or thousands of miles from users, introduce latency—the time it takes for data to travel back and forth. For browsing social media, a 100-millisecond delay is barely noticeable. For an autonomous vehicle making a life-or-death decision? That's an eternity.
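To put rough numbers on the distance penalty, here is a minimal back-of-the-envelope sketch. It assumes signals travel through optical fiber at about 200,000 km/s (roughly two-thirds of the speed of light in a vacuum) and it ignores routing hops, queuing, and server processing, so real round trips are slower. The function name and the sample distances are illustrative, not measurements.

```python
# Best-case propagation delay over fiber.
# Assumption: signals in optical fiber travel at roughly 200,000 km/s.
FIBER_SPEED_KM_PER_S = 200_000.0
KM_PER_MILE = 1.609344


def round_trip_ms(distance_miles: float) -> float:
    """Best-case round-trip propagation time in milliseconds.

    Ignores routing hops, queuing, and server processing, all of which
    add further delay in practice.
    """
    distance_km = distance_miles * KM_PER_MILE
    one_way_s = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_s * 1000.0


if __name__ == "__main__":
    # Hypothetical distances: a distant cloud region vs. a nearby edge site.
    for label, miles in [("distant cloud", 1500), ("regional", 200), ("edge", 10)]:
        print(f"{label:>13}: {round_trip_ms(miles):.2f} ms round trip")
```

Under these assumptions, physics alone sets a floor of about 24 ms at 1,500 miles and well under 1 ms at 10 miles. Observed latency is higher once network hops and processing are added, which is why distance is only part of the story.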

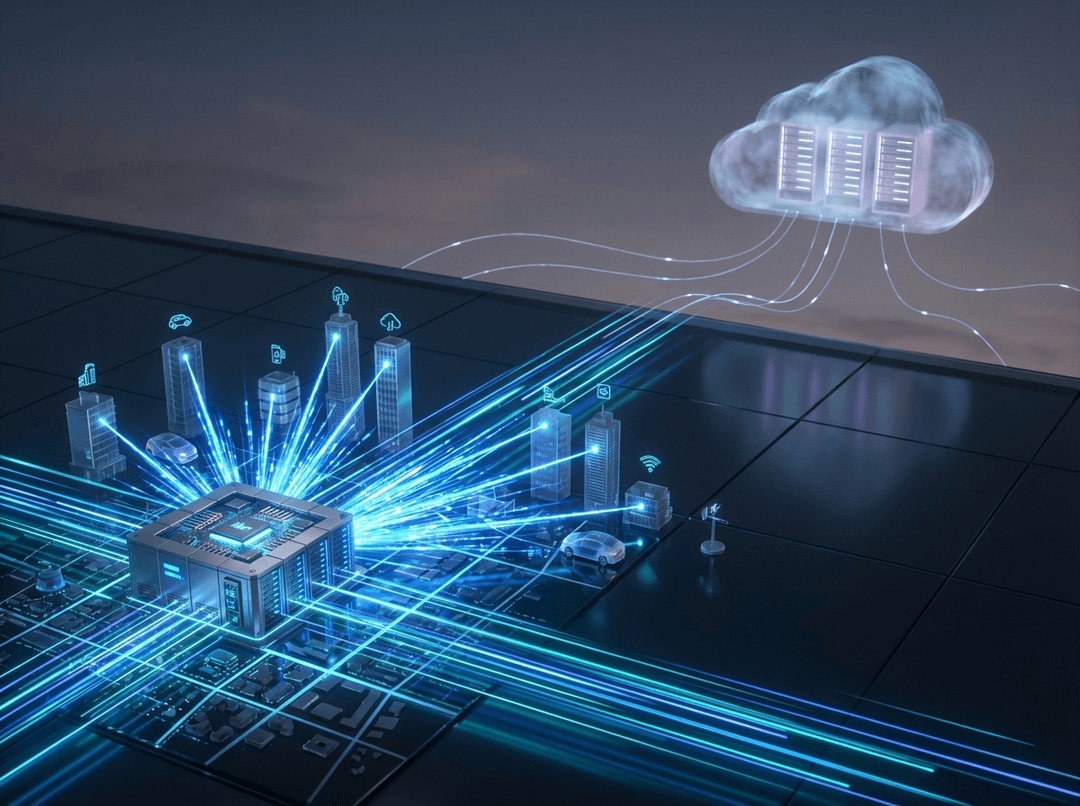

Enter edge data centers: smaller, strategically positioned facilities that bring computing power closer to where data is created and consumed. Think of them as neighborhood convenience stores compared to the distant warehouse superstores of traditional cloud computing.

What Makes Edge Data Centers Different

Edge data centers aren't just miniature versions of their cloud cousins—they're fundamentally rethinking where and how we process information.

Location, Location, Location

These facilities are deliberately placed in urban areas, near cellular towers, or even inside buildings where data-intensive applications operate. By shortening the physical distance data travels, they can cut round-trip latency from 100+ milliseconds to single-digit milliseconds. In 2024, global investment in edge infrastructure reportedly hit $430 billion, a sign that industries no longer see this as optional but as essential.

Designed for Real-Time Processing

Unlike centralized clouds optimized for massive storage and batch processing, edge facilities prioritize immediate computation. They handle the "hot" data—information that needs instant analysis—while sending less time-sensitive data to central clouds for long-term storage and deeper analysis.
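The hot-versus-cold split can be sketched as a simple routing rule. This is a minimal illustration, not how any particular platform does it: the `Reading` type, the `deadline_ms` field, and the 50 ms cutoff are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    source: str
    payload: dict
    deadline_ms: float  # how soon a response is needed


# Hypothetical cutoff: anything needing an answer sooner than this stays local.
HOT_DEADLINE_MS = 50.0


def route(reading: Reading) -> str:
    """Return 'edge' for time-critical data, 'cloud' for everything else."""
    return "edge" if reading.deadline_ms <= HOT_DEADLINE_MS else "cloud"


def partition(readings: list[Reading]) -> dict[str, list[Reading]]:
    """Split a batch into work handled locally and work forwarded upstream."""
    tiers: dict[str, list[Reading]] = {"edge": [], "cloud": []}
    for reading in readings:
        tiers[route(reading)].append(reading)
    return tiers


if __name__ == "__main__":
    batch = [
        Reading("brake-sensor", {"distance_m": 4.2}, deadline_ms=10.0),
        Reading("daily-log", {"rows": 1000}, deadline_ms=60_000.0),
    ]
    for tier, items in partition(batch).items():
        print(tier, [r.source for r in items])
```

A real deployment would weigh more than a single deadline (data size, privacy rules, link health), but the shape of the decision is the same: classify first, then send each item to the nearest tier that can meet its needs.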

Where Edge Computing Changes Everything

The real magic happens when you see edge data centers in action across industries that can't afford to wait.

Autonomous Vehicles: No Time to Think Twice

Picture a self-driving car approaching an intersection. A child's ball rolls into the street. The vehicle's sensors capture this in real time, but the decision to brake must happen in under 10 milliseconds. Sending that data to a distant cloud server, waiting for processing, and receiving instructions back would take far too long.

Edge computing processes sensor data locally, enabling split-second navigation and safety decisions that make autonomous driving viable. The car essentially has a powerful data center traveling with it, backed by nearby edge facilities that coordinate traffic patterns and road conditions.
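A quick way to see why those milliseconds matter is to convert delay into distance traveled. The sketch below is plain arithmetic; the speeds and delays are example values chosen for illustration, and the function name is hypothetical.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres a vehicle covers while waiting delay_ms for a decision."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0
    return speed_m_per_s * (delay_ms / 1000.0)


if __name__ == "__main__":
    # Example values only: a city speed and two decision delays.
    for delay in (10, 100):
        metres = distance_during_delay_m(50, delay)
        print(f"50 km/h, {delay} ms delay: {metres:.2f} m traveled")
```

At 50 km/h, a 100 ms wait means the car has moved about 1.39 m before it even starts to react, while a 10 ms decision costs about 0.14 m.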

Gaming and Streaming: The Competitive Edge

Competitive gamers know that lag kills—literally, in-game. Cloud gaming services like Xbox Cloud Gaming and NVIDIA GeForce NOW rely on edge data centers to deliver console-quality experiences without expensive hardware. When your character's response to a button press determines victory or defeat, those extra 50 milliseconds of latency aren't acceptable.

The same principle applies to live sports streaming and augmented reality applications, where synchronization between what's happening and what you're seeing must be nearly instantaneous.

Smart Cities and IoT: Managing Millions of Conversations

Modern cities are becoming nervous systems of sensors—traffic lights, security cameras, environmental monitors, and utility meters all generating data constantly. A single smart city might have millions of IoT devices creating petabytes of information daily.

Edge data centers process this flood locally, identifying patterns and triggering responses without overwhelming central networks. Traffic lights adjust in real time based on actual conditions. Emergency services receive instant alerts from surveillance systems using AI-powered threat detection. All of this happens at the edge, where decisions matter most.
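One common way to keep that flood off the central network is to reduce each window of raw readings to a small summary at the edge and forward only the summary plus anything that crosses an alert threshold. The following is a minimal sketch under that assumption; the function name, the summary fields, and the threshold are illustrative.

```python
from statistics import mean


def summarize_at_edge(readings: list[float], alert_threshold: float) -> dict:
    """Reduce a window of raw sensor readings to a compact summary.

    Only the summary (and any readings above the threshold) would be
    forwarded upstream; the raw stream stays at the edge site.
    """
    if not readings:
        return {"count": 0, "mean": None, "max": None, "alerts": []}
    return {
        "count": len(readings),
        "mean": mean(readings),
        "max": max(readings),
        "alerts": [r for r in readings if r > alert_threshold],
    }


if __name__ == "__main__":
    # Hypothetical air-quality readings from one street sensor.
    window = [10.0, 20.0, 90.0]
    print(summarize_at_edge(window, alert_threshold=80.0))
```

The trade-off is that detail discarded at the edge cannot be recovered later, so the choice of what to keep in the summary depends on what the central analysis will need.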

Healthcare: When Seconds Save Lives

Beyond remote surgery, edge computing enables real-time patient monitoring in hospitals and homes. Wearable devices track vital signs, and edge processing can detect anomalies instantly—alerting medical staff to a cardiac event before the patient even realizes something's wrong.
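To make "detect anomalies instantly" concrete, here is a minimal sketch of a rolling-baseline check that could run on an edge device. It is an illustration only, not a clinical algorithm: the class name, window size, z-score threshold, and minimum spread are all assumed values.

```python
from collections import deque
from statistics import mean, pstdev


class HeartRateMonitor:
    """Flags readings that deviate sharply from the recent baseline."""

    def __init__(self, window: int = 30, z_threshold: float = 3.0,
                 min_spread: float = 1.0):
        self.history = deque(maxlen=window)  # recent normal readings
        self.z_threshold = z_threshold
        self.min_spread = min_spread  # floor so a flat baseline still works

    def observe(self, bpm: float) -> bool:
        """Record a reading; return True if it looks anomalous."""
        anomalous = False
        if len(self.history) >= 5:  # need a few samples for a baseline
            baseline = mean(self.history)
            spread = max(pstdev(self.history), self.min_spread)
            anomalous = abs(bpm - baseline) / spread > self.z_threshold
        if not anomalous:
            # Keep anomalies out of the baseline so they don't skew it.
            self.history.append(bpm)
        return anomalous


if __name__ == "__main__":
    monitor = HeartRateMonitor()
    for bpm in [70, 72, 71, 69, 70, 71, 72, 70, 150, 71]:
        if monitor.observe(bpm):
            print(f"alert: {bpm} bpm is far from the recent baseline")
```

Because the check needs only a short window of recent values, it can run on the wearable's companion device or a nearby edge node without a round trip to a remote server.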

Medical imaging analysis, powered by AI at the edge, can highlight potential tumors or fractures for radiologists to review. This speeds up diagnosis while keeping sensitive patient data close to where it was captured instead of sending it across long distances.

The Technology Behind the Speed

Edge data centers achieve their low-latency performance through several key innovations:

5G Integration: The 5G rollout and edge computing are deeply intertwined. 5G's decentralized small-cell architecture pairs naturally with distributed edge facilities, enabling ultra-low latency even where device density is high.

AI at the Edge: Rather than sending raw data to the cloud for AI processing, edge facilities run machine learning models locally. This "inference at the edge" means smart cameras, industrial robots, and voice assistants can make intelligent decisions without round-trip delays.

Hybrid Architecture: Edge doesn't replace the cloud—it complements it. The smartest deployments use edge for immediate processing and central clouds for training AI models, long-term analytics, and backup storage. Data flows intelligently between layers based on urgency and importance.
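The division of labor between edge inference and cloud training can be sketched in a few lines. This is a toy example under stated assumptions: the `EdgeNode` class, its method names, and the tiny linear model are hypothetical stand-ins, and the "cloud" is represented only by whoever calls `update_model` and `drain_buffer`.

```python
class EdgeNode:
    """Runs inference locally and queues samples for later cloud upload."""

    def __init__(self, weights: list[float], bias: float = 0.0):
        self.weights = weights  # assumed to have been trained centrally
        self.bias = bias
        self.upload_buffer: list[list[float]] = []

    def infer(self, features: list[float]) -> bool:
        """Local decision: no network round trip on the hot path."""
        score = sum(w * x for w, x in zip(self.weights, features)) + self.bias
        self.upload_buffer.append(features)  # keep for later retraining
        return score > 0.0

    def update_model(self, weights: list[float], bias: float) -> None:
        """Install new parameters pushed down from the central cloud."""
        self.weights, self.bias = weights, bias

    def drain_buffer(self) -> list[list[float]]:
        """Hand buffered samples to a (hypothetical) batch uploader."""
        batch, self.upload_buffer = self.upload_buffer, []
        return batch


if __name__ == "__main__":
    node = EdgeNode(weights=[1.0, -1.0])
    print(node.infer([2.0, 1.0]))    # decided locally
    print(len(node.drain_buffer()))  # samples shipped upstream in bulk
```

The point of the design is that the latency-sensitive call (`infer`) never touches the network, while the slow, bandwidth-heavy steps (uploading samples, receiving retrained parameters) happen in the background on their own schedule.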

The Challenges Nobody Mentions

Edge computing isn't a silver bullet. Distributing infrastructure across hundreds or thousands of locations creates new headaches:

- Management Complexity: Monitoring and maintaining numerous small facilities is harder than managing a few large ones

- Security Concerns: More locations mean more potential attack surfaces

- Cost Considerations: Building out edge infrastructure requires significant capital investment

- Standardization: The industry is still working out best practices and interoperability standards

Yet despite these challenges, the momentum is undeniable. As AI workloads increasingly shift from cloud to edge and hybrid deployments, organizations are prioritizing low-latency, localized processing.

What This Means for You

Even if you're not building autonomous vehicles or smart cities, edge computing is already touching your life. That instant response from your voice assistant, the smooth performance of your video call, the quick loading of location-based app features—edge data centers are working behind the scenes.

For businesses, the question isn't whether to adopt edge computing, but when and how. Applications requiring real-time responsiveness, handling sensitive data that shouldn't travel far, or serving users across dispersed locations are prime candidates for edge deployment.

The Future Is Distributed

We're witnessing a fundamental shift in computing architecture. For decades, the trend was centralization—bigger data centers, more powerful clouds. Edge computing doesn't reverse that trend; it adds a crucial layer that recognizes not all processing should happen in distant facilities.

The future of computing is neither purely centralized nor purely distributed—it's intelligently layered. Critical, time-sensitive processing happens at the edge. Complex analysis and long-term storage happen in the cloud. And the orchestration between these layers happens seamlessly, invisible to users who simply experience faster, more responsive applications.

As our world becomes more connected and more real-time, edge data centers aren't just delivering low-latency applications—they're making possible entirely new categories of technology we're only beginning to imagine.